
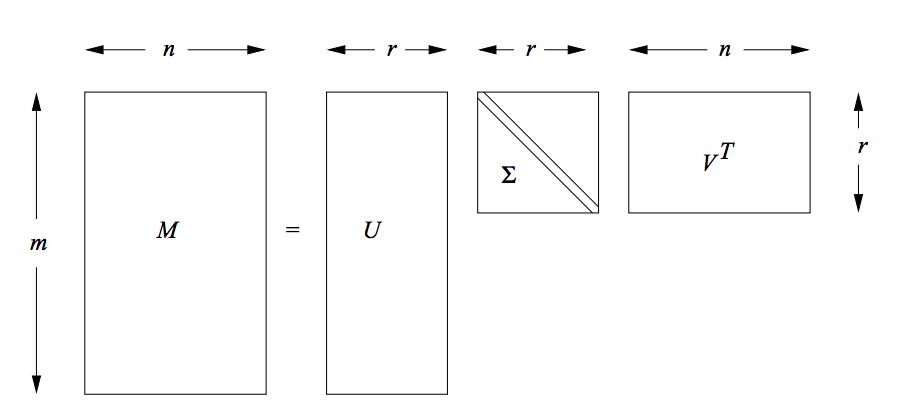

Truncated singular value decomposition (SVD) is a matrix factorization technique that factors a matrix into the product of three matrices, conventionally written U, Σ and Vᵀ, and keeps only the largest singular values. The scikit-learn TruncatedSVD estimator supports two algorithms: a fast randomized SVD solver, and a "naive" algorithm that uses ARPACK as an eigensolver on X * X.T or X.T * X. The number of output components must be strictly less than the number of features; the default value is useful for visualisation. When applied to term-document matrices, the technique is known as latent semantic analysis (LSA).

SVD is also a typical factorization technique for rating prediction (known as a baseline predictor in some works in the literature). Thus, the predicted rating is changed to an expression involving the overall average rating and two further terms.

First, we need to use SciPy's linalg module to perform the SVD. I have done this using SciPy's svd function. I don't really understand SVD, so I might not have done it right (see below), but assuming I have, what I end up with is (1) a matrix U of size $3000\times 3000$, (2) a vector s of length 3000, and (3) a matrix V of size $3000\times 100079$.
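The shapes described above can be reproduced on a smaller scale. The following is a minimal sketch, assuming the original call used `full_matrices=False` (which is what yields a V factor with as many rows as U has columns); the 30 × 200 random matrix is a hypothetical stand-in for the 3000 × 100079 data.

```python
import numpy as np
from scipy import linalg

# Hypothetical stand-in for the 3000 x 100079 matrix in the text.
rng = np.random.default_rng(0)
X = rng.standard_normal((30, 200))

# Thin SVD: U is (30, 30), s has length 30, Vt is (30, 200).
U, s, Vt = linalg.svd(X, full_matrices=False)
print(U.shape, s.shape, Vt.shape)

# Multiplying the three factors back together recovers X.
X_rebuilt = (U * s) @ Vt

# Truncation: keep only the k largest singular values (s is sorted descending).
k = 5
X_k = (U[:, :k] * s[:k]) @ Vt[:k, :]
```

Here `X_k` is the best rank-`k` approximation of `X` in the least-squares sense, which is the quantity a truncated SVD works with.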

Contrary to PCA, this estimator does not center the data before computing the singular value decomposition. This means it can work with sparse matrices efficiently. In particular, truncated SVD works on term count/tf-idf matrices as returned by the vectorizers in sklearn.feature_extraction.text.

The rank of an array is the number of its singular values that are greater than tol. We can initialize numpy arrays from nested Python lists.
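As a small illustration of the rank definition, the sketch below builds an array from nested Python lists and counts the singular values above a threshold. The matrix and the value of `tol` are arbitrary choices made for this example.

```python
import numpy as np

# Array initialized from nested Python lists; the second row is twice the first.
A = np.array([[1.0, 2.0, 3.0],
              [2.0, 4.0, 6.0],
              [1.0, 0.0, 1.0]])

# Singular values only (no U or Vt needed for the rank).
s = np.linalg.svd(A, compute_uv=False)

# Rank = number of singular values greater than tol (tol chosen for illustration).
tol = 1e-10
rank = int(np.sum(s > tol))
print(rank)
```

`np.linalg.matrix_rank(A, tol=tol)` applies the same rule and can be used to cross-check the count.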

The algorithm parameter is either arpack, for the ARPACK wrapper in SciPy, or randomized, for the randomized algorithm due to Halko (2009). The n_iter parameter is the number of iterations for the randomized SVD solver.

Software versions mentioned: Python 3.6.3, MATLAB R2017b, numpy 1.13.3, Numerical Recipes in C (3rd edition), Eigen 3.3.4.
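To see how the two solver options relate, the following sketch fits both on the same data and compares the singular values they report. The exactly rank-5 test matrix is an assumption made so that the randomized solver has an easy target; on data with a slowly decaying spectrum the two results may differ more.

```python
import numpy as np
from sklearn.decomposition import TruncatedSVD

# Hypothetical data: a 100 x 40 matrix of exact rank 5.
rng = np.random.default_rng(0)
X = rng.standard_normal((100, 5)) @ rng.standard_normal((5, 40))

# n_components (5) must be strictly less than the number of features (40).
svd_arpack = TruncatedSVD(n_components=5, algorithm="arpack", random_state=0).fit(X)
svd_rand = TruncatedSVD(n_components=5, algorithm="randomized", n_iter=5,
                        random_state=0).fit(X)

print(svd_arpack.singular_values_)
print(svd_rand.singular_values_)
```

Raising `n_iter` generally tightens the randomized approximation at the cost of more passes over the data.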

`TruncatedSVD(n_components=2, *, algorithm='randomized', n_iter=5, random_state=None, tol=0.0)`

Dimensionality reduction using truncated SVD (aka LSA). This transformer performs linear dimensionality reduction by means of truncated singular value decomposition (SVD). An SVD implementation is also available in OpenCV 2.3 and later.
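A minimal LSA-style usage sketch follows, assuming scikit-learn is installed. The four-sentence corpus is made up for illustration, and `n_components=2` is simply the default from the signature above.

```python
from scipy import sparse
from sklearn.decomposition import TruncatedSVD
from sklearn.feature_extraction.text import TfidfVectorizer

# Hypothetical toy corpus, used only for illustration.
docs = [
    "singular value decomposition of a matrix",
    "matrix factorization for dimensionality reduction",
    "latent semantic analysis of text documents",
    "text documents as tf-idf vectors",
]

# The vectorizer returns a sparse tf-idf matrix (documents x terms).
X_tfidf = TfidfVectorizer().fit_transform(docs)

# The sparse matrix is passed directly to TruncatedSVD.
svd = TruncatedSVD(n_components=2, random_state=0)
X_lsa = svd.fit_transform(X_tfidf)

print(sparse.issparse(X_tfidf), X_lsa.shape)
```

Each row of `X_lsa` is a two-dimensional representation of one document, which is why the default of two components is convenient for plotting.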